Modernizing a CI+CD pipeline with GitHub Actions

Our CI+CD has been working for five years. You know, if it ain’t broke, don’t fix it. But the company is not the same anymore. It’s time to update it!

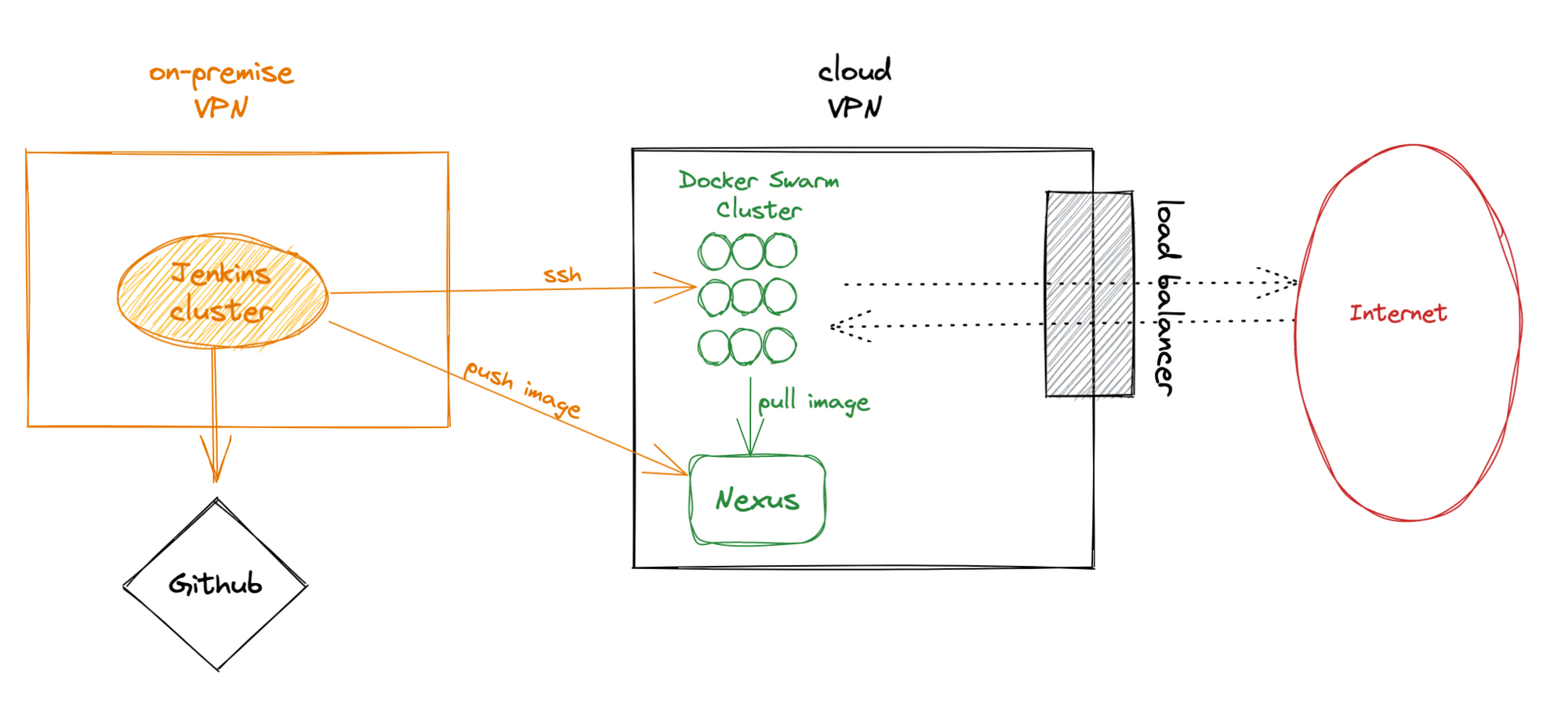

Let me sum up our current setup. I am going to be brief, I promise. We have an on-premise Jenkins cluster. That is, we manage (and maintain) a bunch of hosts that run Jenkins slaves and a host that runs the master. They sit within our on-premise VPN, a remnant of our first hosting provider.

Our production backend runs a containerized system on top of a Docker Swarm cluster. Our container registry is Nexus, which allows us to deploy our services in the Swarm.

Both systems run within their own independent VPNs.

Our code repositories are in GitHub, and Jenkins listens for changes there. When we merge to develop/production, Jenkins pushes the image to Nexus, connects via SSH to the managers of the cluster and runs the deployment command (docker stack deploy). We have a common Jenkins pipeline for all our services.
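
To make that flow concrete, here is a minimal Python sketch of the two steps Jenkins performs on a merge. It is not our actual pipeline: the image name, stack name, compose file and manager host are all hypothetical, and the function only builds the commands instead of running them.

```python
import shlex


def deployment_commands(image, stack, compose_file, manager_host):
    """Return the commands for one deployment, in the order they would run."""
    # Step 1: push the already-built image to the registry (Nexus in our case).
    push = ["docker", "push", image]
    # Step 2: the deploy command has to run on a Swarm manager,
    # so it is sent as a single remote command over ssh.
    remote = shlex.join(
        ["docker", "stack", "deploy", "--compose-file", compose_file, stack]
    )
    deploy = ["ssh", manager_host, remote]
    return [push, deploy]


# Hypothetical values, for illustration only.
commands = deployment_commands(
    image="nexus.example.internal/acme/users-service:1.0.0",
    stack="users",
    compose_file="docker-compose.yml",
    manager_host="deployer@swarm-manager.example.internal",
)
for command in commands:
    print(shlex.join(command))
```

Each returned list could be handed to `subprocess.run(command, check=True)`, so that a failed push stops the deployment before ssh is ever called.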

Pros:

- Simple.

- Security on our side (VPN certificates + ssh keys are in our servers).

- Jenkins is commonly known.

- Stable: we haven’t had to change it much in all this time.

Cons:

- We need to maintain the Jenkins machines and keep them up to date.

- As the team grows, the Jenkins cluster has to grow too to be able to run more jobs.

- We have only configured Java 8 and 11.

- Still on our old hosting provider.

We have been pretty happy with this setup so far. Still, maintenance is something that we always want to simplify at Playtomic. The fewer systems, the better. Besides, new versions of Java require new versions of the JDK, Maven and thus… Jenkins. We have already been using GitHub Actions in other projects here, so we know that it could be a fine replacement for Jenkins. The workflow itself defines the environment it needs to run (for example, the Java version or the architecture), so it makes complete sense to use GitHub Actions.

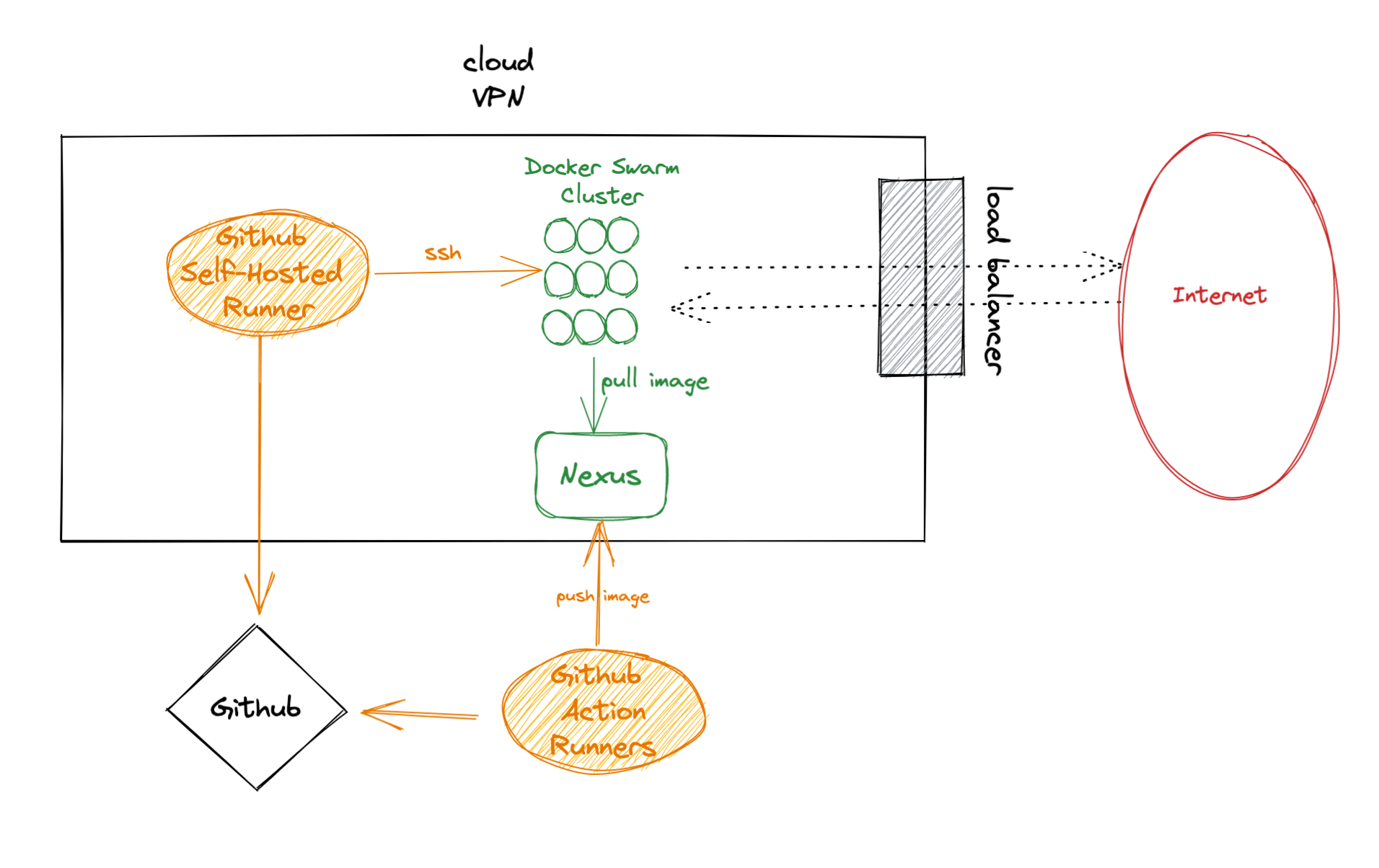

Replacing Jenkins with GitHub Actions

We rewrote the Jenkins pipeline as a GitHub Actions workflow. We had two main concerns:

- What about security? We use SSH keys to access the cluster. We could store them in GitHub secrets, but we don’t like having such an important piece of our security stored with a third party.

- Cost might be a problem in the future. GitHub Actions is billed per minute of compute, and we have 50 services.
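
A rough way to reason about that cost is to multiply it out. The sketch below is only back-of-the-envelope arithmetic: the 50 services come from our setup, but the runs per month, the minutes per run, the included minutes and the price per minute are made-up placeholders, not GitHub's real rates.

```python
def estimate_monthly_cost(services, runs_per_service, minutes_per_run,
                          price_per_minute, included_minutes=0):
    """Return (total_minutes, cost) for one month of hosted-runner usage."""
    total_minutes = services * runs_per_service * minutes_per_run
    # Only the minutes beyond what the plan already includes are billed.
    billable_minutes = max(0, total_minutes - included_minutes)
    return total_minutes, billable_minutes * price_per_minute


# All numbers except the 50 services are hypothetical placeholders.
total_minutes, cost = estimate_monthly_cost(
    services=50,
    runs_per_service=20,
    minutes_per_run=5,
    price_per_minute=0.01,
    included_minutes=2000,
)
print(f"{total_minutes} minutes per month, about {cost:.2f} in billed usage")
```

The useful part is the shape of the formula: the bill grows linearly with the number of services and with how often each one is built, which is why it is worth watching as the team grows.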

We discovered that GitHub Actions lets you run self-hosted runners… so that’s the solution to both problems. Right now we can afford the minutes that we are spending, so we only added one host, just for deployments: a t3.nano, which is pretty cheap. The SSH keys are still 100% under our control, as they are installed on that machine.

Pros:

- Still a common pipeline (workflow), but it lets everyone use new stuff (new Java versions, different languages, …) without installing more tools in Jenkins.

- Security is still on our side.

Cons:

- We need to monitor the cost.

What’s next?

Containerized GitHub Actions runner

Worried about the cost? If you have a cluster, you can run as many copies of the GitHub Actions runner as you want!

On-demand self-hosted runners

This would be a huge improvement for controlling the cost while still being able to scale the number of runners: https://github.com/machulav/ec2-github-runner

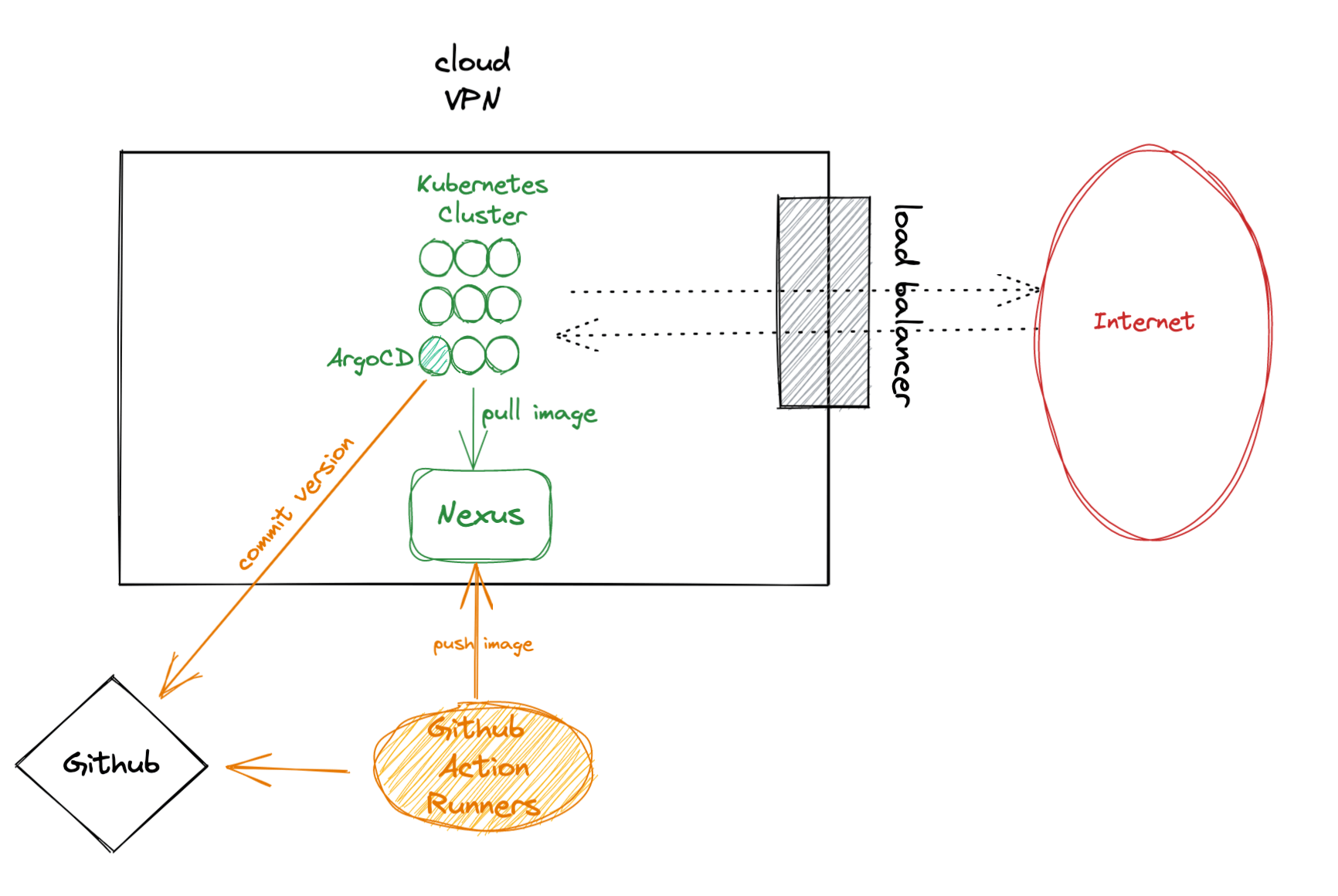

Separate the CI from the CD

Having separate pipelines for CI and CD is a good idea, as their lifecycles are pretty different. In our current setup, the workflow is responsible for running the deployment command. If that fails, the whole pipeline fails, even though the build was successful.
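
The difference is easy to see in a toy Python model. This is only an illustration of the idea, not how GitHub Actions reports status: `build` and `deploy` are hypothetical callables that return True on success.

```python
def run_coupled(build, deploy):
    """One pipeline, one status: a failed deployment hides a good build."""
    return build() and deploy()


def run_separated(build, deploy):
    """Two statuses: the build result survives a failed deployment."""
    build_ok = build()
    # A deployment is only attempted when there is something to deploy.
    deploy_ok = deploy() if build_ok else None
    return build_ok, deploy_ok


def successful_build():
    return True


def failing_deploy():
    return False


print(run_coupled(successful_build, failing_deploy))
print(run_separated(successful_build, failing_deploy))
```

In the coupled version the only signal is "failed", so someone has to open the logs to learn that the artifact is actually fine. In the separated version the build status can be trusted on its own and the deployment can be retried independently.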

We have already tested ArgoCD in Kubernetes so that the CD is handled by the cluster itself. If you are running Kubernetes, you can already do that with FluxCD or ArgoCD.

Replace Nexus with GitHub Packages

Nexus requires a lot of space (as it stores all the containers, libraries, packages, … of your organization). We don’t want to maintain that space, be responsible for the backups, … so we are considering migrating to GitHub Packages too.